The Memory and Flash Supercycle is not a quarterly blip. DRAM prices are projected to climb 171% year-over-year through 2027, and the conditions driving those increases show no sign of easing after that. AI infrastructure demand continues to accelerate. DDR4 fabrication capacity is not coming back. NAND flash contract prices jumped 55–60% in Q1 2026 alone. Nearline HDD shortages driven by AI inference demand are pushing organizations toward flash faster than planned. Server lead times stretch into months. Industry analysts project tight supply conditions through at least 2028, with some forecasts extending constrained markets into 2030 and beyond. Every component in the data center costs more than it did a year ago, and the forces driving those increases are structural, not cyclical.

Key Takeaways

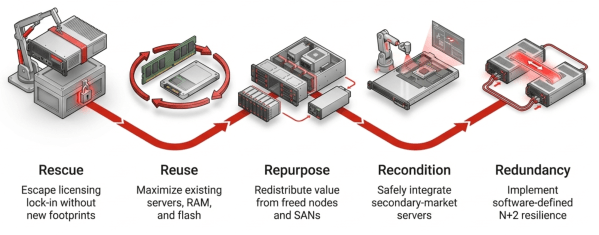

- Rescue — Broadcom’s VMware licensing changes compound the supercycle’s hardware cost increases. A private cloud platform that abstracts from the physical layer lets organizations migrate off VMware without buying new infrastructure.

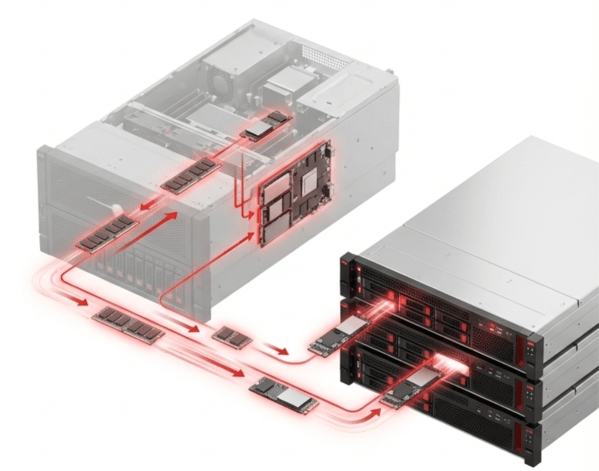

- Reuse — A platform that installs on existing servers keeps current RAM, SSDs, and drives in production. Software-managed storage lets organizations run safely on commodity NVMe drives instead of paying enterprise SSD premiums that continue to widen.

- Repurpose — Consolidating to fewer servers frees hardware for reuse as data protection nodes or parts donors. Aging SANs can be stripped and reloaded with more efficient software. Mixed node types and roles in the same cluster make redistribution practical.

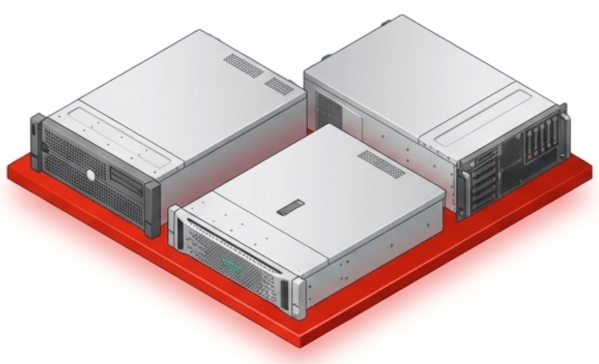

- Recondition — With new server prices inflated and lead times stretching months, reconditioned servers at a fraction of the cost become a serious procurement strategy. Private cloud abstraction removes CPU generation and brand as constraints.

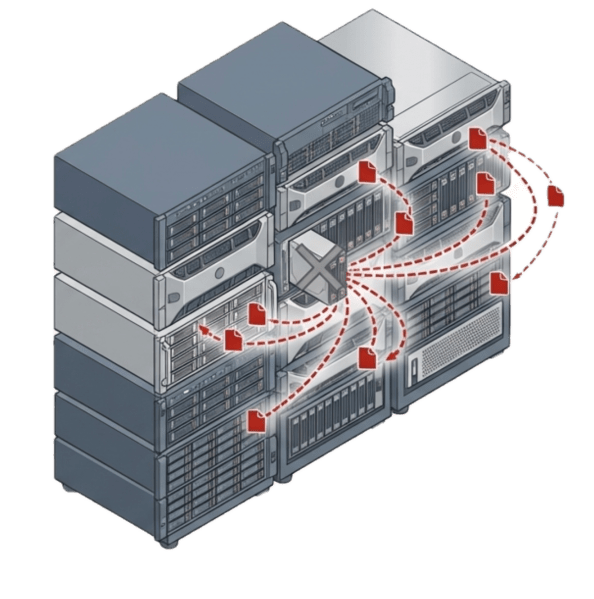

- Redundancy — Older and mixed-generation hardware carries higher failure risk. The platform must deliver N+2 or greater protection built in and included in the license. Bolting on third-party backup and replication to protect a cost-optimized infrastructure defeats the savings.

This is the environment that makes private cloud infrastructure the defining architectural decision of the next five years. Not a marketing term for a VMware cluster behind a firewall, but a real strategy. A true private cloud is a software-defined infrastructure designed to run on commodity hardware from multiple vendors. It abstracts workloads from specific server brands, CPU generations, and drive classes. The organizations that weather the supercycle will be the ones running on that kind of platform. They will live by five words: rescue, reuse, repurpose, recondition, and redundancy.

Key Terms

- Memory and Flash Supercycle — A sustained period of rising DRAM, NAND flash, and HDD prices driven by AI infrastructure demand, DDR4 end-of-life, and constrained fabrication capacity. Industry analysts project tight supply through at least 2028, with some forecasts extending into 2030 and beyond.

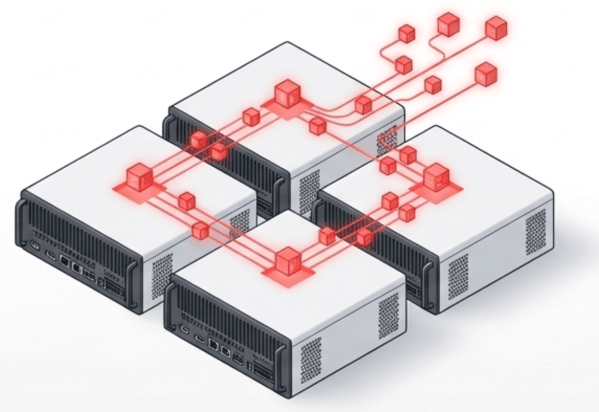

- Private Cloud — A software-defined infrastructure designed to run on commodity hardware from a variety of vendors. Differs from a VMware cluster or orchestrated stack by integrating virtualization, storage, networking, and data protection into a unified platform rather than coordinating separate products.

- Hardware Abstraction — The ability of a platform to operate independently of specific server brands, CPU generations, or drive classes. Enables organizations to mix vendors, reuse older hardware, and deploy reconditioned servers without compatibility constraints.

- Commodity NVMe — Standard NVMe solid-state drives that cost significantly less than enterprise or server-class SSDs. Private cloud platforms that manage storage at the software layer make commodity drives production-safe through wear management, deduplication, and software-defined replication.

- N+2 Data Protection — A redundancy level that sustains two simultaneous component failures without data loss or downtime. Critical for environments running older, mixed-generation, or reconditioned hardware where failure probability is higher.

- Reconditioned Server — A previously deployed server, typically one or two generations behind current models, refurbished and resold at a fraction of the original price. Private cloud abstraction makes generation and brand irrelevant, turning reconditioned servers into viable production assets.

- Parts Donor — A server freed by workload consolidation whose DRAM modules and NVMe drives are redistributed across the active production cluster. Requires a platform that supports mixed node types and mixed hardware specifications.

Rescue Your Organization from Licensing Lock-In

The first priority is operational. Broadcom’s restructuring of VMware licensing created an urgency that predates the supercycle but now compounds it. Organizations locked into VMware face rising software costs on top of rising hardware costs. The rescue is a migration to a platform that eliminates the licensing pressure and, just as importantly, reduces the hardware footprint required to run the same workloads. A private cloud with a more efficient hypervisor consumes less RAM for its own overhead, leaving more of the installed memory for workloads.

A private cloud platform that abstracts the hypervisor, storage, and networking from the physical layer gives organizations the freedom to move without buying new infrastructure to move onto. The rescue happens on existing hardware.

Reuse the Hardware You Already Own

Reuse is the natural extension of rescue. When a private cloud platform installs on the servers already in the rack, those servers stay in production. The RAM stays. The SSDs stay. There is no forklift upgrade during a period when forklift upgrades cost 30–50% more than they did two years ago.

Reuse also applies to flash. With NAND prices climbing and QLC SSDs poised for a breakout year, organizations need platforms that can safely run on commodity NVMe drives rather than requiring enterprise-class SSDs. Software-managed wear leveling, combined with inline deduplication that reduces the number of writes reaching each drive, makes commodity flash a viable production choice. The cost difference between enterprise and commodity SSDs is widening, not narrowing. Platforms that demand enterprise drives force organizations to pay the premium. A private cloud that manages storage at the software layer lets organizations reuse whatever flash makes economic sense.
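To make the write-reduction argument concrete, here is a minimal sketch of inline deduplication as a content-addressed block store. It is an illustration only, not any platform's actual implementation: the `DedupStore` class, the 4 KiB block size, and the ten-VM workload are all assumptions, and wear leveling is not modeled.

```python
import hashlib


class DedupStore:
    """Toy content-addressed store: identical blocks are written only once."""

    def __init__(self):
        self.blocks = {}          # digest -> block bytes (what reaches the drive)
        self.logical_writes = 0   # blocks the workload asked to write
        self.physical_writes = 0  # blocks actually written to flash

    def write(self, block: bytes) -> str:
        self.logical_writes += 1
        digest = hashlib.sha256(block).hexdigest()
        if digest not in self.blocks:
            # First time this content is seen: it costs a real write.
            self.blocks[digest] = block
            self.physical_writes += 1
        return digest


store = DedupStore()
# Hypothetical workload: ten VMs cloned from one template each write the
# same 4 KiB OS block, then one block of data unique to that VM.
os_block = b"\x00" * 4096
for vm in range(10):
    store.write(os_block)
    store.write(f"vm-{vm}-data".encode().ljust(4096, b"\x01"))

print(store.logical_writes, store.physical_writes)
```

In this toy workload, 20 logical writes become 11 physical writes. Fewer physical writes mean less wear on each drive, which is the property that makes lower-endurance commodity flash more practical.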

Repurpose Freed Servers and Storage

When a more efficient platform consolidates workloads onto fewer servers, the freed hardware has immediate value. One server becomes a dedicated data protection node. The rest become parts donors. Pull the DRAM modules and NVMe drives and redistribute them across the active production cluster. A private cloud that supports mixed node types and roles within the same cluster makes this redistribution practical without requiring matching hardware specifications.
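The redistribution described above comes down to simple inventory arithmetic. The sketch below assumes a hypothetical `Server` record and a `plan_repurpose` helper, neither of which corresponds to a real tool. It assigns the first freed server as the data protection node and tallies the DRAM and NVMe that the remaining donors contribute.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    dimms_gb: list[int]    # capacity of each installed DIMM, in GB
    nvme_tb: list[float]   # capacity of each installed NVMe drive, in TB


def plan_repurpose(freed: list[Server]):
    """First freed server becomes the protection node; the rest are donors."""
    if not freed:
        return None, {"dimm_count": 0, "ram_gb": 0, "nvme_count": 0, "nvme_tb": 0.0}
    protection_node, donors = freed[0], freed[1:]
    pool = {
        "dimm_count": sum(len(s.dimms_gb) for s in donors),
        "ram_gb": sum(sum(s.dimms_gb) for s in donors),
        "nvme_count": sum(len(s.nvme_tb) for s in donors),
        "nvme_tb": sum(sum(s.nvme_tb) for s in donors),
    }
    return protection_node, pool


# Hypothetical example: consolidation frees three identical servers,
# each with 8 x 32 GB DIMMs and 4 x 4 TB NVMe drives.
freed = [Server(f"old-{i}", [32] * 8, [4.0] * 4) for i in range(3)]
node, pool = plan_repurpose(freed)
print(node.name, pool)
```

With these assumed numbers, two donor servers yield 16 DIMMs (512 GB) and 8 NVMe drives (32 TB) for the production cluster, none of which has to be bought at supercycle prices.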

Repurposing also applies to storage arrays. An aging SAN that no longer justifies its maintenance contract can be stripped down and reloaded with software that delivers better performance and efficiency. The enclosure, the drives, and the network connections stay in production under new management.

Recondition Older Servers into Production Assets

The supercycle makes reconditioned servers a serious procurement strategy. When new servers carry inflated prices and multi-month lead times, a two-generation-old server at a fraction of the cost becomes attractive. Private cloud infrastructure built on commodity hardware abstraction does not care about CPU generation or server brand. A reconditioned Dell server runs alongside a current-generation HPE server. Each node takes whatever role the cluster needs: compute, storage, or both.

Build Redundancy into the Platform, Not onto the Invoice

A strategy built on reuse, repurposing, and reconditioned hardware only works if the platform can absorb the higher failure rates that come with older equipment. An aged drive wears faster. A reconditioned power supply has more hours on it. A repurposed server pulled from a decommissioned cluster was not built for another five-year tour. The hardware economics of the supercycle push organizations toward older and mixed-generation gear. The availability architecture has to account for that.

This is where redundancy becomes a defining requirement, not an optional add-on. The platform must deliver N+2 or greater protection to handle multiple simultaneous component failures without data loss or downtime. Traditional RAID controllers do not meet this bar. They protect against one or two drive failures within a single enclosure, they add cost and consume RAM, and they are themselves a single point of failure. During a supercycle, RAID controllers are also increasing in price and lead time, adding another line item to an already inflated bill of materials.

The redundancy has to be software-defined, built into the platform, and included in the license. Snapshot-driven replication at the storage layer protects data without depending on dedicated hardware. If a drive fails, the platform rebuilds from distributed copies across the cluster. If a node fails, workloads restart on surviving nodes with access to the same replicated data. The protection spans the entire cluster, not a single shelf. Organizations that bolt on third-party backup and replication products to protect a cost-optimized private cloud infrastructure end up spending what they saved. The goal is one license, one codebase, and one operational model.
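One way to reason about the "two simultaneous failures" requirement is a toy model in which every block has three copies on distinct nodes. The sketch below is an assumption for illustration, not a description of how any particular platform places data: the round-robin `place_copies` function, the five node names, and the copy count are all hypothetical.

```python
from itertools import combinations


def place_copies(block_id: int, nodes: list[str], copies: int) -> set[str]:
    """Place `copies` replicas on distinct nodes, round-robin from the block id."""
    start = block_id % len(nodes)
    return {nodes[(start + i) % len(nodes)] for i in range(copies)}


def survives(placement: dict[int, set[str]], failed: set[str]) -> bool:
    """True if every block still has at least one copy on a surviving node."""
    return all(holders - failed for holders in placement.values())


nodes = ["node-a", "node-b", "node-c", "node-d", "node-e"]
placement = {b: place_copies(b, nodes, copies=3) for b in range(100)}

# Exhaustively test every combination of two and of three failed nodes.
two_failures_ok = all(survives(placement, set(f)) for f in combinations(nodes, 2))
three_failures_ok = all(survives(placement, set(f)) for f in combinations(nodes, 3))
print(two_failures_ok, three_failures_ok)
```

In this model every pair of node failures leaves at least one copy of each block, while some triple failures do not. That is the boundary the N+2 label describes, and because the copies span nodes rather than drives in one enclosure, the protection applies to the whole cluster.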

The Private Cloud Moment

The supercycle did not create the need for private cloud infrastructure. It removed the last excuse for avoiding it. Organizations that abstract their workloads from specific hardware gain the flexibility to rescue themselves from licensing traps, reuse what they own, repurpose what they free up, recondition what they buy, and protect all of it with redundancy that does not add cost. VergeOS is a first-of-its-kind private cloud operating system that differs from other private cloud and software-defined infrastructure solutions because it integrates all typical infrastructure services into a single, unified codebase. It runs on any x86 hardware, provides data protection, and incurs a 2–3% memory overhead. The infrastructure decisions made in 2026 will define costs through 2031. The next five years belong to organizations that treat their hardware as flexible inventory, not fixed commitments.

Frequently Asked Questions

- What is the Memory and Flash Supercycle? — A sustained period of rising DRAM, NAND flash, and HDD prices driven by AI infrastructure demand, DDR4 end-of-life, and constrained fabrication capacity. DRAM prices are projected to increase 171% year-over-year through 2027, and analysts project tight supply conditions through at least 2028, with some forecasts extending into 2030 and beyond.

- Why is private cloud infrastructure relevant now? — Three forces converge: VMware licensing pressure from Broadcom; component cost inflation across RAM, flash, and servers; and technology maturity that enables truly integrated platforms. Organizations need to reduce hardware footprints and extend the life of existing equipment, which requires a platform that abstracts from specific hardware.

- Can I migrate from VMware without buying new servers? — Yes. A private cloud platform that supports hardware abstraction installs on the servers already in the rack. Node-by-node migration moves workloads off VMware without a parallel environment or a maintenance window. The servers, RAM, and SSDs you already own stay in production.

- Is it safe to run commodity NVMe drives in production? — With the right platform, yes. Software-managed wear leveling and inline deduplication reduce total writes to each drive, extending drive life. Software-defined replication at N+2 or greater protects against multiple simultaneous drive failures without hardware RAID, so a commodity drive that wears out sooner can be replaced without data loss or downtime.

- What happens to servers freed up after consolidation? — One freed server becomes a dedicated data protection node. The remaining servers become parts donors. Pull the DRAM modules and NVMe drives and redistribute them across production nodes. A platform that supports mixed node types and roles makes this practical without matching hardware specifications.

- Are reconditioned servers a viable option during the supercycle? — Yes. When new servers carry inflated prices and multi-month lead times, a two-generation-old server at a fraction of the cost becomes a serious procurement strategy. Private cloud abstraction removes CPU generation and brand as constraints, so reconditioned hardware runs alongside current-generation equipment.

- Why does redundancy need to be built into the platform? — A strategy built on reuse, repurposing, and reconditioned hardware carries higher failure risk from older equipment. The platform must deliver N+2 or greater protection included in the license. Organizations that bolt on third-party backup and replication to protect a cost-optimized infrastructure end up spending what they saved.

- How long will the supercycle last? — Industry analysts project tight supply conditions through at least 2028, with some forecasts extending constrained markets into 2030 and beyond. The forces driving the supercycle are structural, not cyclical. Infrastructure decisions made in 2026 will define costs through 2031.
