For many enterprises, solid state disks (SSDs) are no longer a future consideration; they can already picture SSD at work in their data centers. Many environments can take advantage of SSD right now, and the organization can benefit from the performance improvements that SSDs offer in the form of increased revenue generation, customer satisfaction, and employee productivity. The jump to SSD is not a shot in the dark: users can make an informed decision by using common utilities already in the data center, plus a little visual guidance on what to look for.

Prior to running any tests or benchmarks, the first question to ask is: are there applications in the environment that would produce greater benefit for the enterprise if their performance were improved? Benefits might include orders processed more quickly, improved customer satisfaction, or customers not leaving your web site because they are tired of waiting (which takes only a few seconds nowadays). Could your employees produce more work if they did not have to wait for the IT environment to respond, or for a simulation job to finish? If the answer to any of these questions is yes, then the time to look at SSD is now.

In the past, when SSD price was an issue, only extremely bottlenecked applications were worth considering, and they had to be segmentable, meaning that specific components of the application could be moved to high-speed SSD. Neither restriction applies any longer. The cost of SSDs is now such that even applications that would see a modest performance gain, with a modest increase in productivity or revenue, are viable candidates. These applications no longer have to be sophisticated databases where only specific files require moving to SSD. The capacity of the modern SSD is such that in some cases the entire application and all its data can be moved to the high-speed platform.

These days, any application that is storage I/O bottlenecked and could benefit the organization if it performed better is an SSD candidate.

For the purposes of this article we will use screenshots from Windows Perfmon to demonstrate the characteristics to examine when evaluating the viability of SSDs. We will show a series of before and after SSD performance results. For these Perfmons, nothing else changed in the environment other than the insertion of an SSD system.

These screenshots, provided by Texas Memory Systems, are based on real customer data; the customer in question is an online gaming platform with thousands of concurrent users. Each user or player pays to use the service, so game response time is critical for customer retention. A positive, responsive gaming experience leads to a customer who will not only continue to renew their membership but also refer other players to the service.
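The counters shown in the screenshots can also be examined numerically if Perfmon data is logged to CSV. The following is a minimal sketch, not a definitive parser: it assumes a CSV export whose column headers end in the standard counter paths for total processor time, disk transfers per second (IOPS), and disk bytes per second (bandwidth). The function name `load_counters`, the host name `HOST`, and the sample rows are invented for illustration and are not the customer data discussed here.

```python
import csv
import io

# Counter path suffixes to look for in the CSV header (assumed export layout).
COUNTER_SUFFIXES = {
    "cpu_pct": r"\Processor(_Total)\% Processor Time",
    "iops": r"\PhysicalDisk(_Total)\Disk Transfers/sec",
    "bytes_per_sec": r"\PhysicalDisk(_Total)\Disk Bytes/sec",
}


def load_counters(csv_file):
    """Return {name: [float samples]} for each counter found in the header."""
    reader = csv.reader(csv_file)
    header = next(reader)
    columns = {}
    for name, suffix in COUNTER_SUFFIXES.items():
        for index, title in enumerate(header):
            if title.endswith(suffix):
                columns[name] = index
    series = {name: [] for name in columns}
    for row in reader:
        for name, index in columns.items():
            try:
                series[name].append(float(row[index]))
            except (ValueError, IndexError):
                pass  # skip blank or malformed samples
    return series


# Invented two-sample log, for illustration only.
SAMPLE = r'''"(PDH-CSV 4.0) (Pacific Standard Time)(480)","\\HOST\Processor(_Total)\% Processor Time","\\HOST\PhysicalDisk(_Total)\Disk Transfers/sec","\\HOST\PhysicalDisk(_Total)\Disk Bytes/sec"
"01/01/2010 10:00:00.000","34.5","5001.2","41000000"
"01/01/2010 10:00:01.000","35.5","4998.8","40900000"
'''

series = load_counters(io.StringIO(SAMPLE))
print(series["cpu_pct"])
```

In practice the `io.StringIO(SAMPLE)` argument would be replaced by an open file handle on the exported log.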

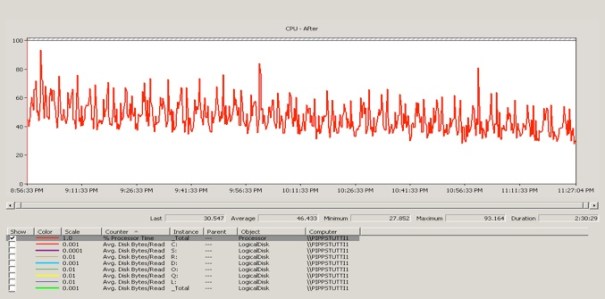

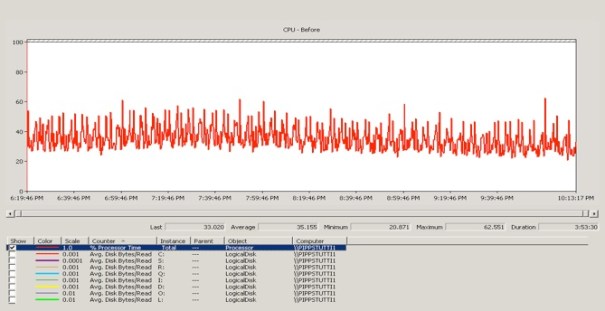

Once a candidate application has been identified, the next step is to make sure the application is indeed storage I/O bound. Three measurements help reveal a storage I/O problem: CPU utilization, disk bandwidth utilization, and disk I/Os per second (IOPS). The first and probably most telling step in this process is to examine CPU utilization.

What we are looking for here is relatively low CPU utilization: utilization below 50% indicates a problem, and utilization below 30% is not uncommon. If processor utilization is only 30%, the processor is waiting on something else 70% of the time. Most often, it is waiting on storage.

If CPU utilization is already high, 60% or higher, then stop; it is unlikely at this point that there is a storage-related performance bottleneck. If faster processors are available, scaling up in compute power is the next logical course of action. After that, the next step is to scale out by adding servers to the application and possibly compartmentalizing it further across those servers.
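The CPU rules of thumb above can be written down as a small check. This is a sketch under stated assumptions: the 50% and 60% thresholds come from the text, but the article does not say what to conclude between them, so that band is reported here as "inconclusive". The function name and sample values are illustrative only.

```python
from statistics import mean

LOW_CPU_PCT = 50.0   # below this, the CPU is probably waiting on something
HIGH_CPU_PCT = 60.0  # at or above this, storage is unlikely to be the bottleneck


def classify_cpu(samples):
    """Return (average, verdict) for a list of CPU utilization percentages."""
    if not samples:
        raise ValueError("need at least one CPU sample")
    avg = mean(samples)
    if avg < LOW_CPU_PCT:
        return avg, "possible storage I/O wait"
    if avg >= HIGH_CPU_PCT:
        return avg, "CPU bound"
    # Assumption: the 50-60% band is not covered by the article.
    return avg, "inconclusive"


avg, verdict = classify_cpu([33.0, 36.5, 35.0, 34.5, 36.0])
print(f"average CPU {avg:.1f}% -> {verdict}")
# prints: average CPU 35.0% -> possible storage I/O wait
```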

In the “before” Perfmon screenshot above you can see that the CPUs in the environment were not under a great deal of stress at all, with each averaging about 35%. Clearly this is a candidate for solid state disk.

After the insertion of SSDs, CPU utilization rose markedly to 46%, an increase of about 11 percentage points, or roughly 30% in relative terms. With SSD you will find that I/O performance should scale linearly with CPU load.
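To put the before and after averages on a common footing, the change can be stated both in percentage points and as a relative increase. A minimal sketch using the two averages quoted above (the function name is ours, for illustration):

```python
def utilization_change(before_pct, after_pct):
    """Return (percentage-point change, relative change in percent)."""
    if before_pct <= 0:
        raise ValueError("before_pct must be positive")
    points = after_pct - before_pct
    relative = points / before_pct * 100.0
    return points, relative


points, relative = utilization_change(35.0, 46.0)
print(f"{points:.0f} points, {relative:.0f}% relative increase")
# prints: 11 points, 31% relative increase
```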

The goal of SSD is to make the CPU the bottleneck, essentially moving the performance problem back to the CPU. At that point there are two options: increase the processing capabilities of the environment by purchasing faster processors, or rewrite the code to use the available processing power more efficiently.

There are times, especially with a constant user load such as 1,000 gamers, when there may not be a dramatic increase in I/O performance after SSDs are deployed. In those cases, however, there will almost certainly be a dramatic decrease in response time.

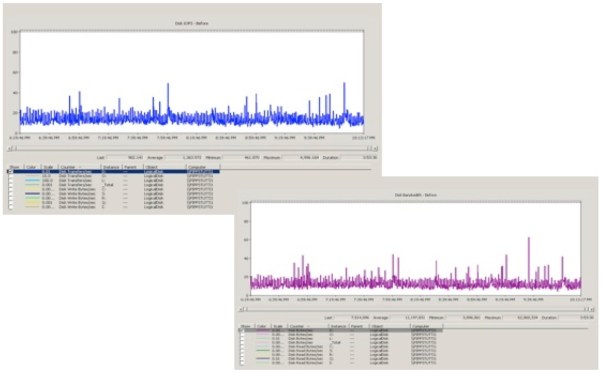

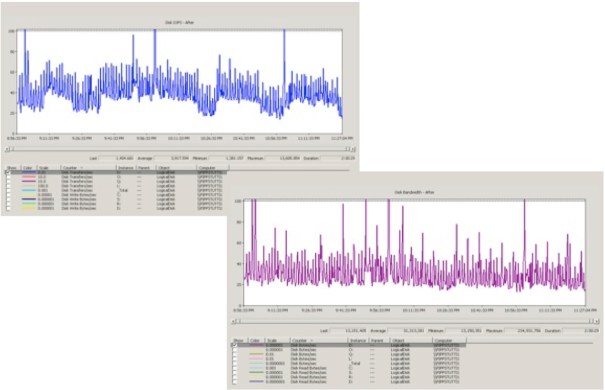

In addition to the CPU, it is also advisable to look at disk IOPS and disk bandwidth. Notice the near flatline in both the “before” disk bandwidth and disk IOPS Perfmons. This indicates that storage performance has hit the wall.

Notice in the “after” screenshots that the measurements are more scattered. This indicates that there is plenty of available performance and bandwidth for the applications.
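The visual distinction between a flatline and a scattered trace can be approximated numerically. One possible approach, which is our substitution for eyeballing the graph and not a method from the source, is the coefficient of variation (standard deviation divided by mean): a very small value suggests the counter is pinned at a ceiling. The 5% threshold and the sample figures below are hypothetical.

```python
from statistics import mean, pstdev

FLATLINE_CV = 0.05  # hypothetical threshold; tune for your environment


def coefficient_of_variation(samples):
    """Standard deviation relative to the mean; infinite for an idle disk."""
    avg = mean(samples)
    if avg == 0:
        return float("inf")  # an idle disk is flat but not saturated
    return pstdev(samples) / avg


def looks_saturated(samples, threshold=FLATLINE_CV):
    """True when the trace is nearly flat at a non-zero level."""
    return coefficient_of_variation(samples) < threshold


# Invented IOPS samples, for illustration only.
before_iops = [4980, 5010, 4995, 5005, 4990, 5000]
after_iops = [6200, 11800, 8400, 15100, 7300, 12900]

print(looks_saturated(before_iops), looks_saturated(after_iops))
```

The same check applies unchanged to disk bandwidth samples.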

If, before implementing SSD, your measurements show low CPU utilization (as in the “before” CPU utilization screenshot) but scattered disk IOPS (as in the “after” disk IOPS measurement), the cause is probably a network issue. In other words, the CPU is waiting on something, but it is not storage.
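Taken together, the CPU and disk observations form a simple triage. The sketch below encodes the reasoning of this article only; the function name is hypothetical, whether the disk trace is "flatlined" is left to the reader's judgment, and the 50% to 60% CPU band, which the article does not address, is returned as inconclusive.

```python
def triage(avg_cpu_pct, disk_flatlined):
    """Map average CPU utilization and disk trace shape to a likely diagnosis."""
    if avg_cpu_pct >= 60.0:
        return "CPU bound: scale up or out before considering SSD"
    if avg_cpu_pct < 50.0 and disk_flatlined:
        return "storage I/O bound: SSD candidate"
    if avg_cpu_pct < 50.0:
        return "CPU is waiting on something other than storage: check the network"
    # Assumption: the article gives no guidance between 50% and 60%.
    return "inconclusive: gather more data"


print(triage(35.0, True))
print(triage(35.0, False))
```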

After identifying a performance problem, the next step is to determine whether you can address your storage I/O issue by improving your mechanical drive infrastructure or whether it makes more sense to implement SSD.

