There are two well-known facts about solid state disk (SSD). First, SSD is fast, and second, SSD is expensive compared with traditional disk drives. There are also two well-known facts about the data center. First, almost every data center needs more performance, and second, almost every IT manager is dealing with either a flat or shrinking budget. As a result, SSD needs to be implemented in an efficient manner, one that properly balances performance and cost.

The need for performance has most IT managers considering SSD when they evaluate new storage systems. However, the cost of flash and the realities of the IT budget either push SSD out of consideration quickly or limit its use to only the most performance-sensitive and highest revenue-generating applications. Broad adoption is going to require achieving true SSD efficiency in order to bring down this cost.

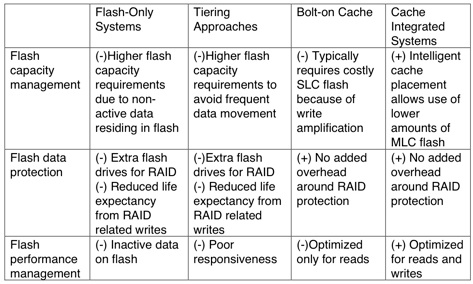

Today’s flash implementation options include flash-only storage systems, systems that use flash SSD as a tier, or storage systems that use flash as a cache. The question to ask is: “Which of these solutions is the most likely to achieve maximum SSD efficiency?”

To this end, there are typically three areas where storage vendors focus their efforts: 1) efficient flash capacity management, 2) efficient flash data protection and 3) efficient flash performance management. Achieving maximum efficiency in each of these areas can enable a storage vendor to deliver a new level of cost-effective performance to data center applications, especially in virtualized environments.

Efficient Flash Capacity Management

Flash-Only Systems With Deduplication

Flash-only systems eliminate the presumed complexity of managing multiple storage tiers by eliminating the tiers themselves. The goal of these systems is to provide a flash-only storage solution in the same price range as name-brand storage solutions from legacy storage vendors configured with high-speed (15k RPM) drives. To accomplish this goal, they typically build in efficiency features such as deduplication and compression to make the premium-priced flash systems more cost-effective.

Deduplication is the most common method that flash vendors use to narrow the price gap between mechanical hard drives and flash-based storage. Deduplication is the process of segmenting data and identifying redundant patterns between the segments, so that each redundant segment is stored only once. Its initial implementation was in the backup environment, where it allowed disk-based backup systems to become more price-competitive with tape. While it is feasible to get up to 20x data reduction with dedupe in backup environments, primary workload data will not typically have anywhere near this level of redundancy. Even in the best-case scenario, a virtualized server environment may only produce efficiency rates in the 5x range, and in other environments it may be as low as 2x or less.
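As a rough illustration of the segment-and-fingerprint idea described above, the following Python sketch splits a byte stream into fixed-size segments and counts how many are unique. The function name `dedupe_ratio`, the 4 KiB segment size, and the use of SHA-256 fingerprints are assumptions made for this example; real arrays often use variable-size segments and their own fingerprinting schemes.

```python
import hashlib


def dedupe_ratio(data: bytes, segment_size: int = 4096) -> float:
    """Return logical size divided by unique stored size (hypothetical helper)."""
    if not data:
        return 1.0
    unique = {}  # fingerprint -> length of the one stored copy
    for offset in range(0, len(data), segment_size):
        segment = data[offset:offset + segment_size]
        fingerprint = hashlib.sha256(segment).digest()
        # A segment whose fingerprint was already seen is not stored again.
        unique.setdefault(fingerprint, len(segment))
    stored = sum(unique.values())
    return len(data) / stored


# Ten identical segments plus ten distinct ones: 20 logical, 11 unique.
repeated = b"A" * 4096 * 10
distinct = b"".join(bytes([i]) * 4096 for i in range(10))
print(round(dedupe_ratio(repeated + distinct), 2))
```

The ratio depends entirely on how much of the data repeats, which is why backup streams (many near-identical copies) reduce far better than primary workload data.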

This creates a problem for pure solid state storage solutions. In this scenario, non-active and non-critical data end up residing in expensive flash. There is a performance penalty due to the deduplication process. Worst of all, capacity savings from dedupe and compression technologies may not be enough to offset the costs of the flash SSD.
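The capacity argument can be made concrete with simple arithmetic. The sketch below is illustrative only: the per-GB prices are placeholder numbers chosen for the example, not market data, and the function names are hypothetical.

```python
def effective_cost_per_gb(raw_cost_per_gb: float, reduction_ratio: float) -> float:
    """Cost per GB of logical data after dedupe/compression."""
    if reduction_ratio <= 0:
        raise ValueError("reduction_ratio must be positive")
    return raw_cost_per_gb / reduction_ratio


def breakeven_ratio(flash_cost_per_gb: float, hdd_cost_per_gb: float) -> float:
    """Reduction ratio flash needs to match unreduced HDD on cost per GB."""
    return flash_cost_per_gb / hdd_cost_per_gb


# Placeholder prices (assumptions): flash at 10 units/GB, HDD at 1 unit/GB.
FLASH, HDD = 10.0, 1.0
for ratio in (5.0, 2.0):
    print(ratio, effective_cost_per_gb(FLASH, ratio))
print("break-even ratio:", breakeven_ratio(FLASH, HDD))
```

Under these assumed prices, even the 5x best case leaves flash at twice the per-GB cost of hard disk, and the 2x case leaves it at five times the cost.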

Thus, while these systems may be appropriate for high-end, performance-sensitive environments, they may not always be the best choice for the average data center workload requiring cost-effective performance.

Using Less Flash by Leveraging Hard Disk Drives

Another way of reducing costs is to consume less flash by only using it for the most active data sets. There are two approaches to these “use less” methods.

The first is to use the flash SSD as a tier of storage and to either manually or, with newer systems, automatically move data to that tier as it’s being accessed.

The problem with this approach is that new data is written to the hard drive storage tier first. Then, over time as it’s being accessed, the data is promoted to the SSD tier. This means that for a period the user must live with hard disk performance constraints, at least until the data is promoted to the flash-based tier. This process happens continually as data is moved into and out of the flash storage tier. If care is not taken about how data is written to that tier, the SSD can wear out prematurely. On the flip side, if data does not move frequently, the system may be unable to respond to fluctuating workloads. To reduce this churn, storage vendors may size the flash tier larger than it needs to be so that its turnover rate is lower.
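The promotion behavior described above can be sketched in a few lines of Python. This is a simplified model under stated assumptions: the class name `TieredStore`, the access-count threshold `promote_after`, and the in-memory dictionaries standing in for the two tiers are all hypothetical, and real tiering engines use richer heuristics and also demote data.

```python
from collections import Counter


class TieredStore:
    """Toy automated-tiering model: data is promoted after repeated reads."""

    def __init__(self, promote_after: int = 3):
        self.promote_after = promote_after
        self.hdd = {}          # stands in for the hard disk tier
        self.flash = {}        # stands in for the SSD tier
        self.reads = Counter()

    def write(self, key, value):
        # New data lands on the hard disk tier unless already promoted.
        if key in self.flash:
            self.flash[key] = value
        else:
            self.hdd[key] = value

    def read(self, key):
        if key in self.flash:
            return self.flash[key], "flash"
        value = self.hdd[key]
        self.reads[key] += 1
        if self.reads[key] >= self.promote_after:
            # Promotion moves the block: flash now holds the only copy,
            # so the flash tier must be protected like any other tier.
            self.flash[key] = self.hdd.pop(key)
            del self.reads[key]
        return value, "hdd"


store = TieredStore(promote_after=2)
store.write("block-1", b"payload")
print([store.read("block-1")[1] for _ in range(3)])
```

The first reads are served at hard disk speed, and only later reads benefit from flash, which is the warm-up delay the paragraph above describes.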

The second “use less flash” method is caching. The typical approach is to bolt cache onto a traditional storage architecture. With this model, data is temporarily placed on solid state storage while it’s being accessed frequently. Unlike automated tiering technologies, caching creates a second copy of the data; it’s not the only copy. This means that none of this premium-priced capacity needs to be wasted on data protection schemes like mirroring or RAID. It also means that other data protection processes, like snapshots, are not using flash capacity to store changed block information.

Caching, like automated tiering, then uses a hard disk tier to store the less active data. While it’s fair to be concerned about a cache or tier “miss,” the likelihood of that happening is relatively small thanks to the high capacities of flash-based storage, and it can be virtually eliminated by scaling up the cache ratio. The chances of data becoming inactive and never being accessed again, however, are very high. Keeping inactive data only on SSD, optimized or not, can’t be considered efficient. Both of the other methods are more susceptible to flash storage being filled with less active data.
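To contrast caching with tiering, here is a minimal read-cache sketch in Python. The least-recently-used eviction policy, the class name `FlashReadCache`, and the dictionary standing in for the protected hard disk tier are assumptions for illustration; the article does not specify which eviction policy any vendor uses.

```python
from collections import OrderedDict


class FlashReadCache:
    """Toy flash cache: holds copies of data that also lives on hard disk."""

    def __init__(self, backing: dict, capacity: int):
        self.backing = backing      # stands in for the protected HDD tier
        self.capacity = capacity    # number of blocks the cache can hold
        self.cache = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, key):
        if key in self.cache:
            self.cache.move_to_end(key)   # mark as most recently used
            self.hits += 1
            return self.cache[key]
        self.misses += 1
        value = self.backing[key]
        self.cache[key] = value
        if len(self.cache) > self.capacity:
            # Evicting loses nothing: the hard disk copy is still there.
            self.cache.popitem(last=False)
        return value

    def write(self, key, value):
        self.backing[key] = value         # the durable copy goes to disk
        if key in self.cache:
            self.cache[key] = value       # keep any cached copy consistent


disk = {"a": 1, "b": 2, "c": 3}
cache = FlashReadCache(disk, capacity=2)
for key in ("a", "b", "a", "c"):
    cache.read(key)
print(list(cache.cache), cache.hits, cache.misses)
```

Because every cached block is a copy, inactive data simply ages out of flash, and a larger `capacity` relative to the working set lowers the miss count.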

The challenge that caching solutions have historically had is that thus far they’ve been add-ons or bolt-ons to existing storage systems. Few storage systems have been developed to take full advantage of flash-sized caching capabilities or have integrated those capabilities into a turnkey storage system. Nimble Storage is one of the first vendors to write a file system from the ground up that is designed specifically to benefit from a high-capacity flash-based caching tier, helping to create a new storage system category: cache-integrated storage systems.

Efficient Flash Data Protection

One of the challenges with flash-only storage systems, as well as systems that use flash as a persistent tier of storage, is that it’s incumbent upon them to protect the data that they store. This means the implementation of some sort of RAID algorithm or possibly mirroring to make sure that a failure of a flash device does not result in data loss.

Since there is no other tier, flash-only systems must use either mirroring or RAID 5 or 6 to protect against a drive failure. While the cost of consuming extra drives in the hard disk world is relatively insignificant, consuming an extra flash drive or two for data protection can add substantially to the overall cost of the system. This also means that other data protection techniques like snapshots and clones must keep their secondary data copies on the premium-priced, flash-based storage as well, again consuming expensive flash capacity for non-critical data.
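The capacity cost of each protection scheme follows from simple arithmetic, sketched below. The function name `usable_fraction` is hypothetical, the mirror case assumes a two-way mirror, and the eight-drive group size is an arbitrary example.

```python
def usable_fraction(scheme: str, drives: int) -> float:
    """Fraction of raw capacity left for data under a protection scheme."""
    if scheme == "mirror":          # two-way mirror assumed
        return 0.5
    if scheme == "raid5":           # one drive's worth of parity
        if drives < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        return (drives - 1) / drives
    if scheme == "raid6":           # two drives' worth of parity
        if drives < 4:
            raise ValueError("RAID 6 needs at least 4 drives")
        return (drives - 2) / drives
    raise ValueError(f"unknown scheme: {scheme}")


for scheme in ("mirror", "raid5", "raid6"):
    print(scheme, usable_fraction(scheme, drives=8))
```

On hard disk the lost fraction is cheap; on flash the same fraction is paid for at the premium price.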

Another problem with providing data protection on the flash tier is that it may also reduce life expectancy due to the extra write activity that any data protection algorithm will require. For example, in a RAID 5 configuration one extra parity block is written for each update; in a RAID 6 configuration, two are written. These additional writes occurring continuously can reduce the life expectancy of the SSD devices.
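The extra write activity can be estimated with the commonly cited parity counts. The sketch below is a simplification: the helper names are hypothetical, it counts device writes only (not the reads needed to recompute parity), and it ignores controller-level optimizations.

```python
PARITY_BLOCKS = {"raid5": 1, "raid6": 2}


def small_write_device_writes(scheme: str) -> int:
    """Device writes caused by updating one data block in place."""
    # The data block plus every parity block in its stripe is rewritten.
    return 1 + PARITY_BLOCKS[scheme]


def full_stripe_write_amplification(scheme: str, drives: int) -> float:
    """Blocks written per data block when a whole stripe is written at once."""
    parity = PARITY_BLOCKS[scheme]
    return drives / (drives - parity)


for scheme in ("raid5", "raid6"):
    print(scheme,
          small_write_device_writes(scheme),
          round(full_stripe_write_amplification(scheme, drives=8), 3))
```

Small random updates pay the parity cost on every write, while full-stripe writes spread it across many data blocks, which is why write patterns matter for flash wear.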

Cache-integrated systems like those provided by Nimble Storage don’t have to worry about providing data protection for their flash storage area since it is a cache-based system. All new data is written through the cache and no data is put at risk. These systems only use the cache area to store copies of data that are already resident on hard disk so that subsequent accesses to that data can be accelerated. No capacity is wasted on data protection, nor are extra write cycles wasted in the parity process.

Since the cache is integrated into the file system, systems like Nimble’s can take advantage of the cache to optimize write activity. In fact, Nimble uses a combination of NVRAM and flash. Small random writes are first stored in mirrored NVRAM, where they are coalesced into larger sequential writes as they are written to flash and to high-capacity hard disk. The sequential writes not only improve write performance, but also increase the life expectancy of that flash.
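The general idea of write coalescing can be sketched as follows. This is a generic log-style illustration, not Nimble’s actual implementation: the class name `WriteCoalescer`, the `stripe_blocks` threshold, and the in-memory structures standing in for NVRAM and the sequential log are all assumptions.

```python
class WriteCoalescer:
    """Toy model: buffer small random writes, flush them as one sequential segment."""

    def __init__(self, stripe_blocks: int = 4):
        self.stripe_blocks = stripe_blocks
        self.buffer = {}   # stands in for mirrored NVRAM: block -> data
        self.log = []      # each entry is one large sequential write
        self.index = {}    # block -> (segment number, offset in segment)

    def write(self, block: int, data: bytes) -> None:
        # Rewrites of a buffered block are absorbed without extra media writes.
        self.buffer[block] = data
        if len(self.buffer) >= self.stripe_blocks:
            self.flush()

    def flush(self) -> None:
        if not self.buffer:
            return
        segment = list(self.buffer.items())
        segment_no = len(self.log)
        for offset, (block, _) in enumerate(segment):
            self.index[block] = (segment_no, offset)
        self.log.append(segment)   # one sequential write for many random ones
        self.buffer = {}

    def read(self, block: int) -> bytes:
        if block in self.buffer:
            return self.buffer[block]
        segment_no, offset = self.index[block]
        return self.log[segment_no][offset][1]


w = WriteCoalescer(stripe_blocks=3)
for block, data in [(907, b"x"), (12, b"y"), (907, b"z"), (5551, b"q")]:
    w.write(block, data)
print(len(w.log), w.read(907))
```

Four small writes to scattered block addresses become a single sequential segment, and the repeated write to one block reaches the media only once.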

Efficient Flash Performance Management

An advantage that flash-only storage systems have is that there is no performance management to be done since all data resides on flash. The problem is that not all data justifies residing on flash, and these systems have no mechanism to move it to an HDD-based tier automatically.

Systems that use flash as a tier with some sort of automated tiering technology have a significant performance management challenge. These systems have to analyze data not only for recent activity but also to make sure it’s “flash justifiable,” and then move that data to the flash tier. This requires significant background processing to perform the analysis, as well as storage resources to move data back and forth between the flash and HDD tiers. This extra processing may overwhelm the storage controllers because, as a new process that was bolted on to the storage system’s code, it’s not typically as efficient as something built from the ground up.

Cache-integrated systems that were built from the ground up with knowledge of how flash-based storage works can be designed to leverage flash as a cache and to integrate its use into the overall file system. This type of implementation can lead to a storage system with much greater performance and capacity efficiency.

Summary

Efficient flash management is more than just making sure that the maximum capacity of the flash-based storage can be achieved. While capacity efficiency is critical, efficient flash designs should also factor in the cost of providing data protection and efficient management of performance in the system. By combining these three factors, cache-integrated systems like those offered by Nimble Storage are able to provide highly efficient, turnkey products that leverage the best of flash and the best of HDD technology to deliver a solution that solves real-world, mid-range data center problems.

Nimble Storage is a client of Storage Switzerland