When an organization buys a scale-up storage system, it has to predict how much storage performance and capacity it will need over the life of the investment. If an unforeseen requirement for either resource arises, IT will need to upgrade or replace the system long before it reaches its life expectancy. Scale-out storage resolves this problem by letting the organization add capacity and performance incrementally, as it needs them. But scale-out storage has its own challenges, especially for organizations with multiple sites. To solve the multi-site problem, a new form of storage infrastructure is available: distributed storage.

The Challenges with Scale-Out Storage

Scale-out storage systems do an excellent job of managing capacity, and most can meet the total storage requirements of most organizations. However, there are concerns for organizations with multiple locations that want to distribute data for either collaboration or disaster recovery. The typical scale-out implementation for multiple sites is to create a scale-out storage cluster in each location and then replicate data between them. Sometimes replication is built into the array; sometimes you need to buy separate replication infrastructure. Both approaches are designed to copy data to each site, but how practical are they to use? Replicated storage clusters require the IT department to manage and monitor multiple clusters and replication jobs. There is also the expense of paying for each cluster’s licensing.

For collaboration, scale-out replication also does not ensure data integrity because there is no global file locking between systems. In other words, a data set could be modified at two sites at nearly the same time, but the system will keep only the changes made at one of the sites. Basically, whoever saves last wins.
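The "whoever saves last wins" behavior can be illustrated with a minimal sketch. It is not modeled on any particular product: `SiteCluster`, `replicate`, the site names, and the file name are all hypothetical, and replication is reduced to a simple overwrite of the other site's copy.

```python
from dataclasses import dataclass, field


@dataclass
class SiteCluster:
    """Hypothetical stand-in for one site's scale-out cluster."""
    name: str
    files: dict = field(default_factory=dict)


def replicate(source, target, path):
    """Copy one file to the other site, overwriting whatever is there."""
    target.files[path] = source.files[path]


def demo_last_save_wins():
    new_york = SiteCluster("new-york")
    london = SiteCluster("london")

    # Both sites start from the same replicated copy.
    new_york.files["report.txt"] = "draft"
    replicate(new_york, london, "report.txt")

    # With no global lock, each user edits the local copy independently.
    new_york.files["report.txt"] = "draft + New York edits"
    london.files["report.txt"] = "draft + London edits"

    # London saves last, so its version replaces the New York edits.
    replicate(london, new_york, "report.txt")
    return new_york.files["report.txt"]
```

Calling `demo_last_save_wins()` returns the London version; the New York edits are gone without either user being warned.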

It seems scale-out replication would satisfy the disaster recovery requirement for most organizations, but it does so with some complications. First, specific volumes need to be placed in an active state. Then replication jobs need to be stopped or reversed, and finally applications need to point to a new volume. In a disaster, where there is no other choice, going through these steps is worth it, but the effort limits DR to worst-case scenarios. What if you want to employ more sophisticated capabilities? For example, what if an organization wants to move an application for maintenance or as part of a follow-the-sun strategy?
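To make the three failover steps concrete, here is a rough sketch of them as a single procedure. The `Volume`, `ReplicationJob`, and `Application` types and the `fail_over` function are assumptions made for illustration; real arrays and replication tools expose these operations through their own interfaces.

```python
from dataclasses import dataclass


@dataclass
class Volume:
    name: str
    site: str
    active: bool = False


@dataclass
class ReplicationJob:
    source: Volume
    target: Volume
    running: bool = True


@dataclass
class Application:
    name: str
    volume: Volume


def fail_over(app, job):
    """Apply the three manual steps in order and return a log of them."""
    steps = []

    # Step 1: place the replica volume in an active state.
    job.target.active = True
    steps.append(f"activated {job.target.name} at {job.target.site}")

    # Step 2: stop the replication job (a planned move would reverse it).
    job.running = False
    steps.append(f"stopped replication {job.source.name} -> {job.target.name}")

    # Step 3: point the application at the newly active volume.
    app.volume = job.target
    steps.append(f"repointed {app.name} to {job.target.name}")

    return steps
```

Even in this simplified form, every application needs its own pass through the procedure, which is part of why the approach tends to be reserved for real disasters.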

Distributed Storage

On its surface, distributed storage looks like scale-out storage, except that it is designed to operate in multiple locations as a single cluster, which simplifies storage management. The distributed storage system is aware of the location of each node in its cluster, and IT professionals can specify how many copies of data are kept at each location throughout the organization. If a file is accessed in one location, then it is locked for all locations until it is released. If there is a disaster, an application can restart at an alternate location and access its data the same way it always has. The application is, after all, connected to the same storage cluster. This ease of connectivity and management also means that applications can easily move between locations for more routine maintenance or testing tasks.
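The two behaviors described above, per-location copy counts and a lock that holds across all locations, can be sketched as follows. This is a toy model under stated assumptions: `DistributedCluster` and its methods are hypothetical names, and the lock table is an in-memory dictionary rather than the coordination mechanism a real system would use.

```python
class DistributedCluster:
    """Toy model of one storage cluster that spans several sites."""

    def __init__(self, copies_per_site):
        # Administrator-defined policy: site name -> number of copies kept there.
        self.copies_per_site = dict(copies_per_site)
        # Global lock table shared by every site: path -> site holding the lock.
        self.locks = {}

    def placement(self, path):
        """Return how many copies of the file each site should hold."""
        return dict(self.copies_per_site)

    def open_for_write(self, path, site):
        """Take the global lock; fail if another site already holds it."""
        holder = self.locks.get(path)
        if holder is not None and holder != site:
            return False
        self.locks[path] = site
        return True

    def release(self, path, site):
        """Release the lock, but only if this site is the holder."""
        if self.locks.get(path) == site:
            del self.locks[path]
```

With a policy such as `DistributedCluster({"new-york": 2, "london": 1})`, a second site's `open_for_write` call on the same path returns `False` until the first site calls `release`, so the lost-update case cannot occur.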

The Need for Data Distribution is Growing

New technologies like VMware stretched clusters (formally, vSphere Metro Storage Clusters) and new container and orchestration systems (e.g., Docker and Kubernetes) will only accelerate the need for distributed storage. The frameworks are now in place to move VMs or containers to any location that the organization chooses. Distributed storage is the missing link.

StorageSwiss Take

Distributed storage moves an organization from disaster recovery to high availability. The storage system facilitates not only recovery from a disaster but also application mobility and organizational collaboration.

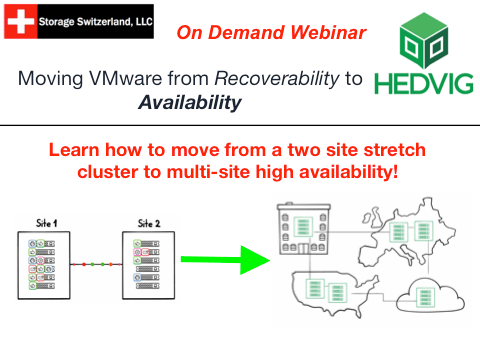

In our on-demand webinar, “Moving VMware from Disaster Recovery to High Availability”, we discuss how a distributed storage infrastructure can enhance and leverage VMware’s stretched cluster features so that all of an organization’s data centers, and even public clouds, can be used to provide availability instead of just recoverability.