Access to almost limitless, on-demand compute and storage means public cloud providers like Amazon Web Services, Google Cloud and Microsoft Azure should factor into the strategy of enterprises of almost any size. Most experts recommend a hybrid approach, but data has gravity, and overcoming the cloud’s data gravity problem is critical to hybrid cloud success.

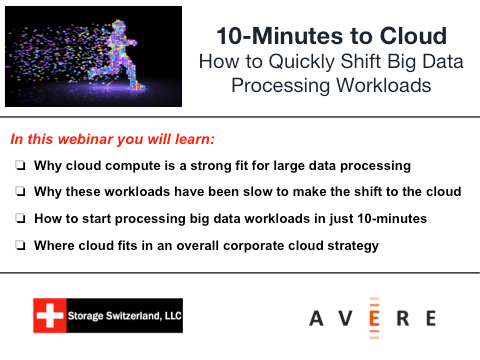

In this StorageShort, Storage Switzerland and Avere Systems discuss data gravity and why it is such a challenge for public cloud providers.

The cloud is very good at temporary tasks. It can apply 1,000 processing cores to an analytics job, finding an answer faster or increasing the amount of data the job can analyze. When the jobs are done, IT can turn those cores off. Data doesn’t work like that. It very rarely shrinks; it is always there and it continues to grow.

One way to deal with the data gravity problem is to put all of the organization’s data in the cloud, either by moving all workloads to the cloud or by copying all data to the cloud. The problem is that moving all data to the cloud and keeping it there for a long time gets expensive. Over time, the periodic payments start to surpass what the organization would pay to store its data on-premises. Also, in many cases, moving all data to the cloud does not mean eliminating all data from on-premises.
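To make the cost argument concrete, here is a minimal break-even sketch. Every figure in it is a made-up placeholder rather than real pricing, and the function name `breakeven_month` and its parameters are hypothetical. It also assumes flat costs, which understates the effect since data usually keeps growing.

```python
def breakeven_month(cloud_monthly, onprem_upfront, onprem_monthly, max_months=120):
    """Return the first month in which cumulative cloud spend exceeds
    cumulative on-premises spend, or None if it never does within max_months."""
    for month in range(1, max_months + 1):
        cloud_total = cloud_monthly * month
        onprem_total = onprem_upfront + onprem_monthly * month
        if cloud_total > onprem_total:
            return month
    return None


# Hypothetical figures for illustration only, not vendor pricing.
month = breakeven_month(cloud_monthly=2300, onprem_upfront=60000, onprem_monthly=500)
print(month)  # 34
```

With these placeholder numbers the recurring cloud bill overtakes the on-premises total in month 34. Plugging in an organization's own figures would shift that point earlier or later.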

A solution is to establish a cloud-based cache and move only the data that needs to be in the cloud at any given point in time. We discuss this in our on-demand webinar and show you how to do it in a live demo. Register now to learn how to eliminate the data gravity problem.
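As a rough illustration of the caching idea, the sketch below keeps only recently used objects on the cloud side and fetches everything else from on-premises storage on demand. It is not the product shown in the webinar. The `CloudCache` class, the dictionary standing in for on-premises storage, and the least-recently-used eviction policy are all assumptions made for this example.

```python
from collections import OrderedDict


class CloudCache:
    """Hypothetical LRU cache standing in for a cloud-side cache of on-premises data."""

    def __init__(self, origin, capacity):
        self.origin = origin          # dict standing in for on-premises storage
        self.capacity = capacity      # maximum number of objects held in the cloud
        self.cached = OrderedDict()   # cloud-resident copies, oldest first
        self.origin_reads = 0         # how many times data crossed the WAN

    def read(self, key):
        if key in self.cached:
            # Cache hit: mark the object as most recently used.
            self.cached.move_to_end(key)
            return self.cached[key]
        # Cache miss: pull the object from on-premises storage.
        value = self.origin[key]
        self.origin_reads += 1
        self.cached[key] = value
        if len(self.cached) > self.capacity:
            # Evict the least recently used object to stay within capacity.
            self.cached.popitem(last=False)
        return value


origin = {"a": 1, "b": 2, "c": 3}
cache = CloudCache(origin, capacity=2)
for key in ["a", "b", "a", "c"]:
    cache.read(key)
print(list(cache.cached), cache.origin_reads)  # ['a', 'c'] 3
```

Only two objects ever sit in the cloud at once, and the repeated read of `a` is served from the cache instead of crossing the network again. The full data set stays on-premises.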